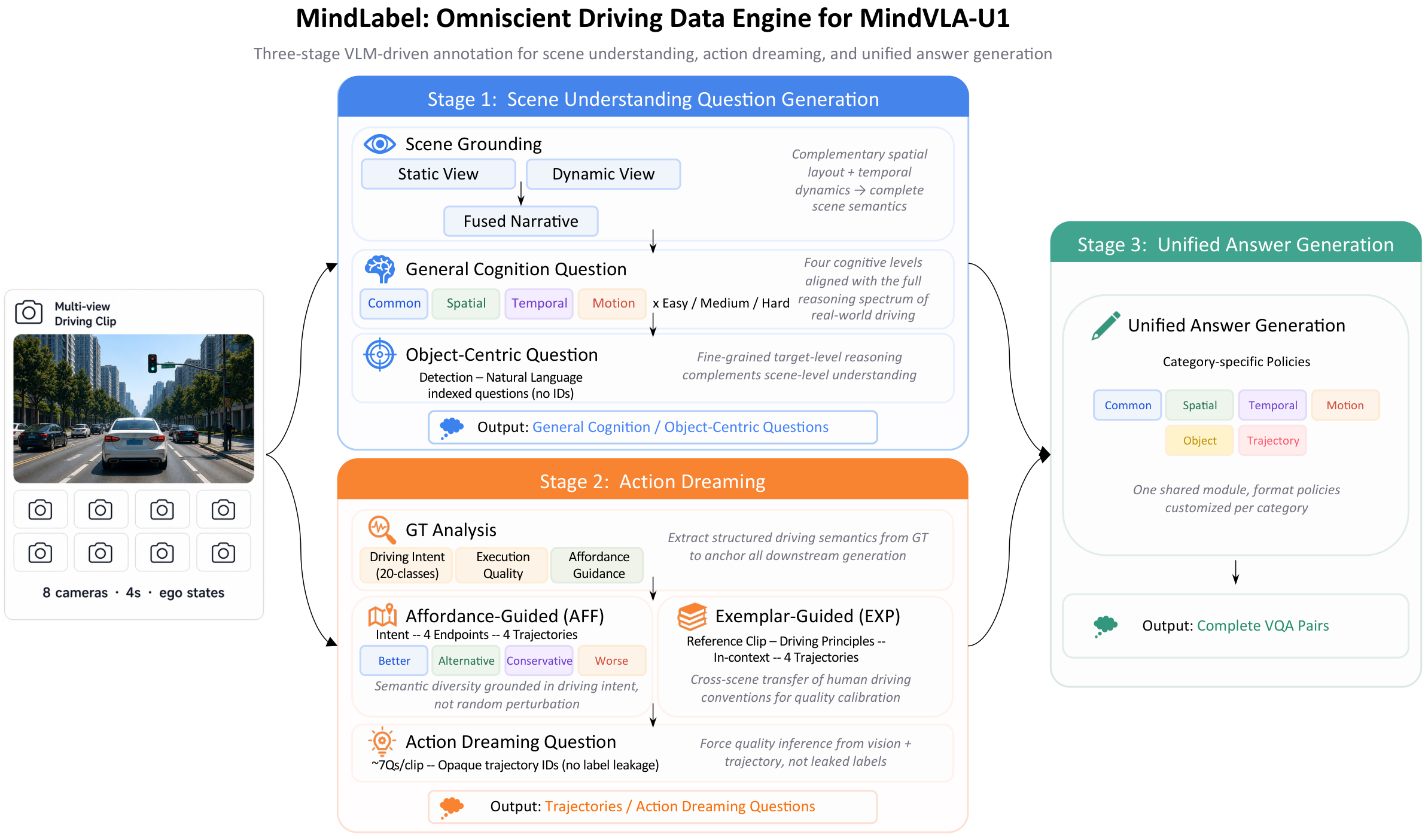

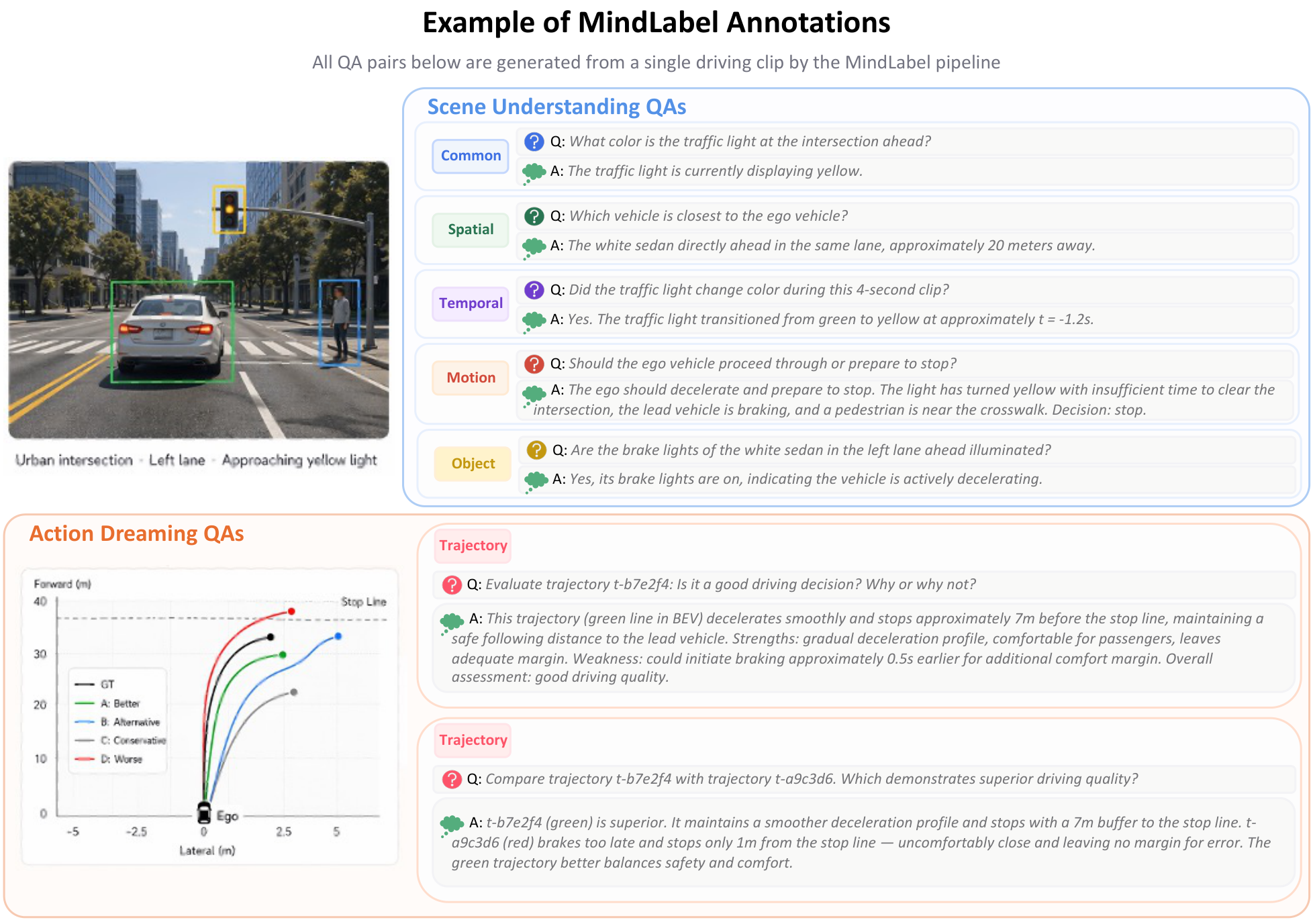

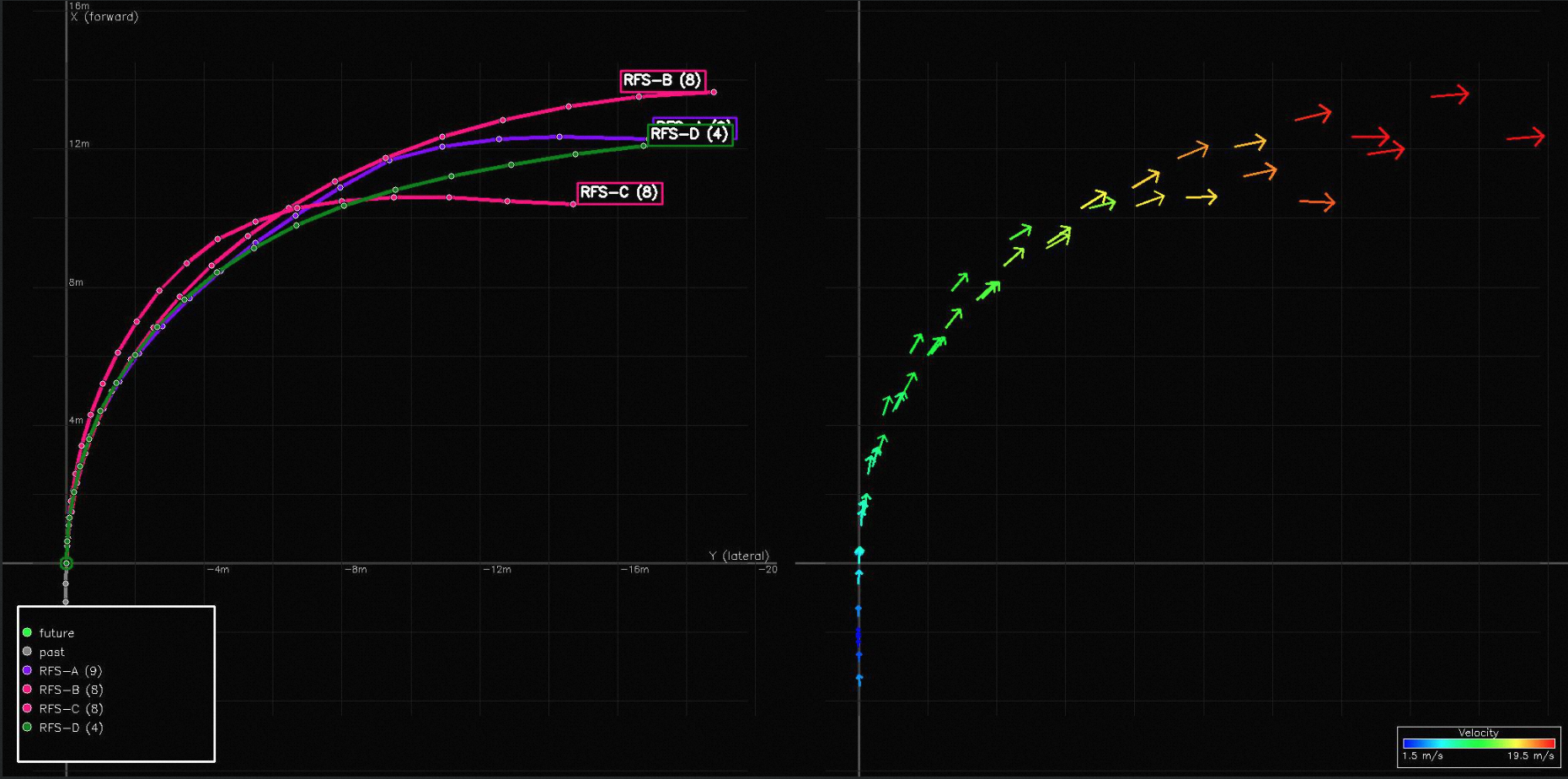

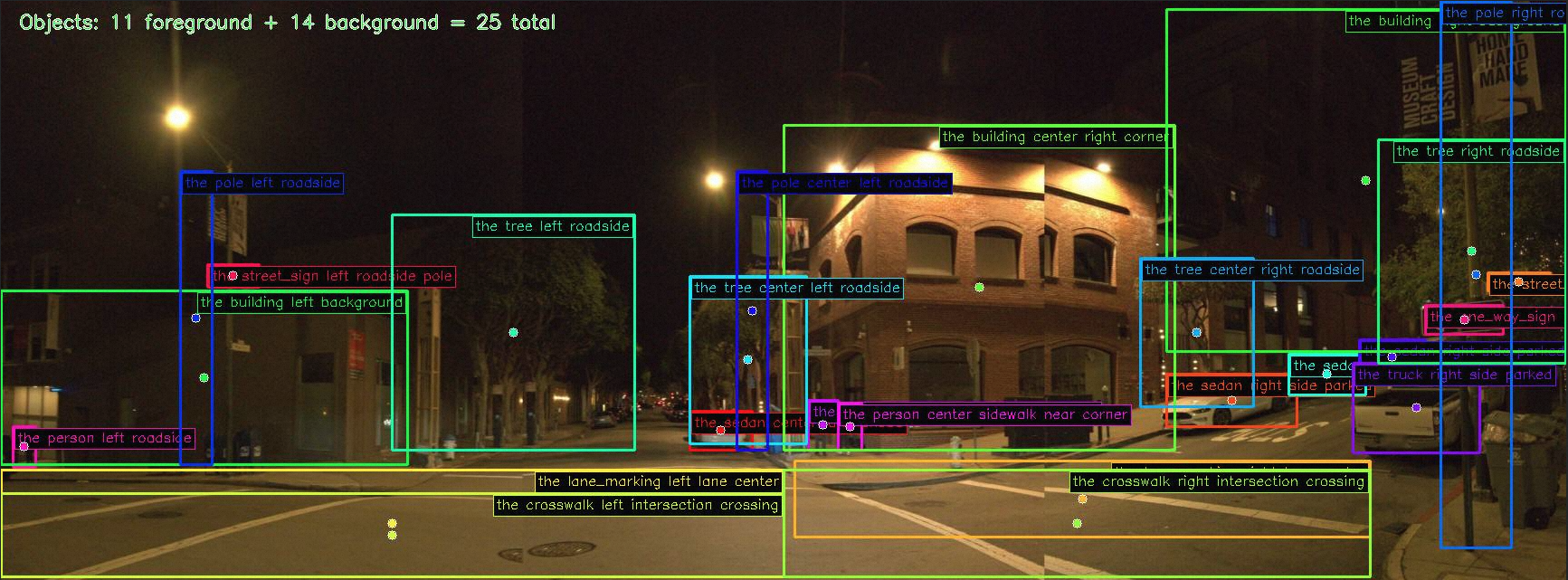

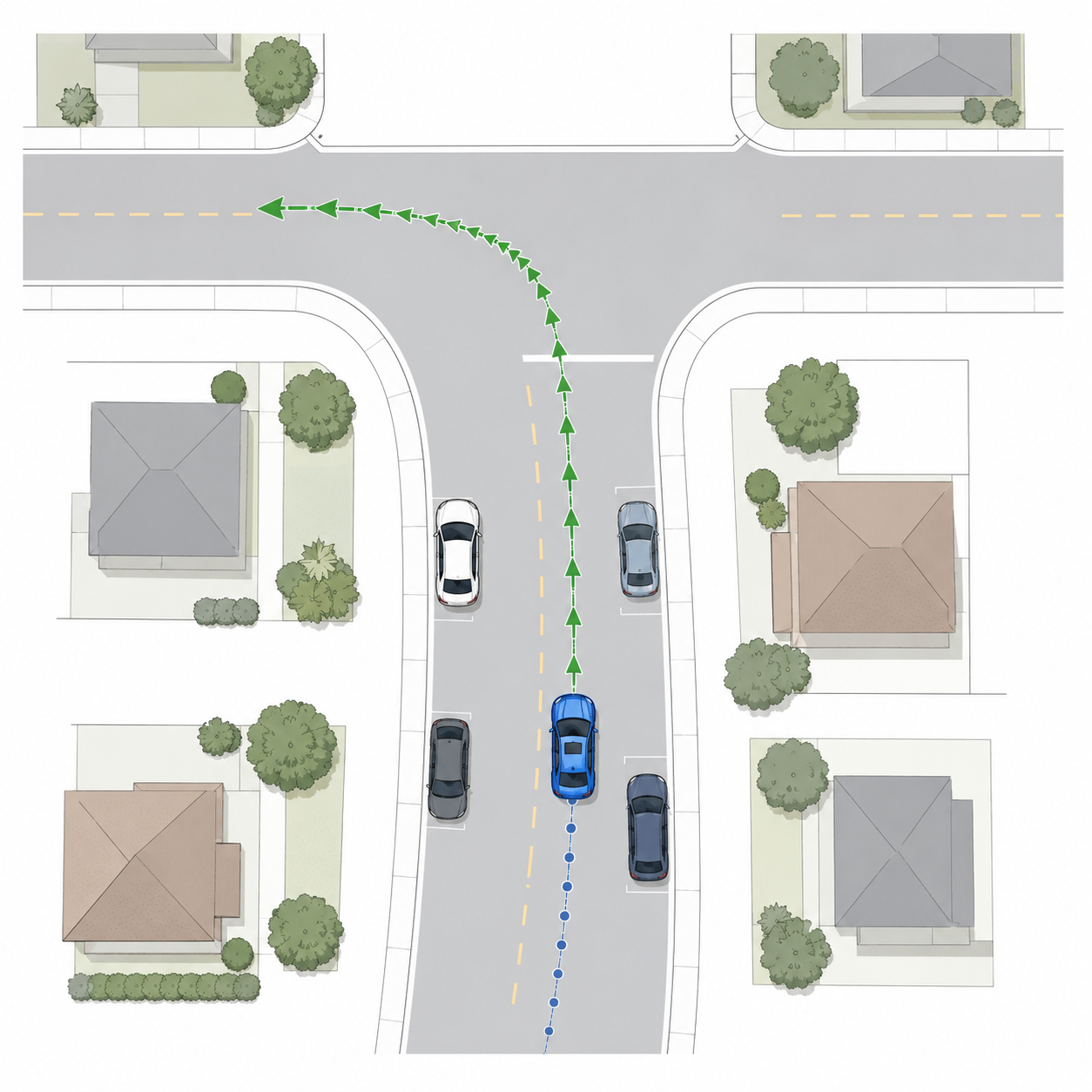

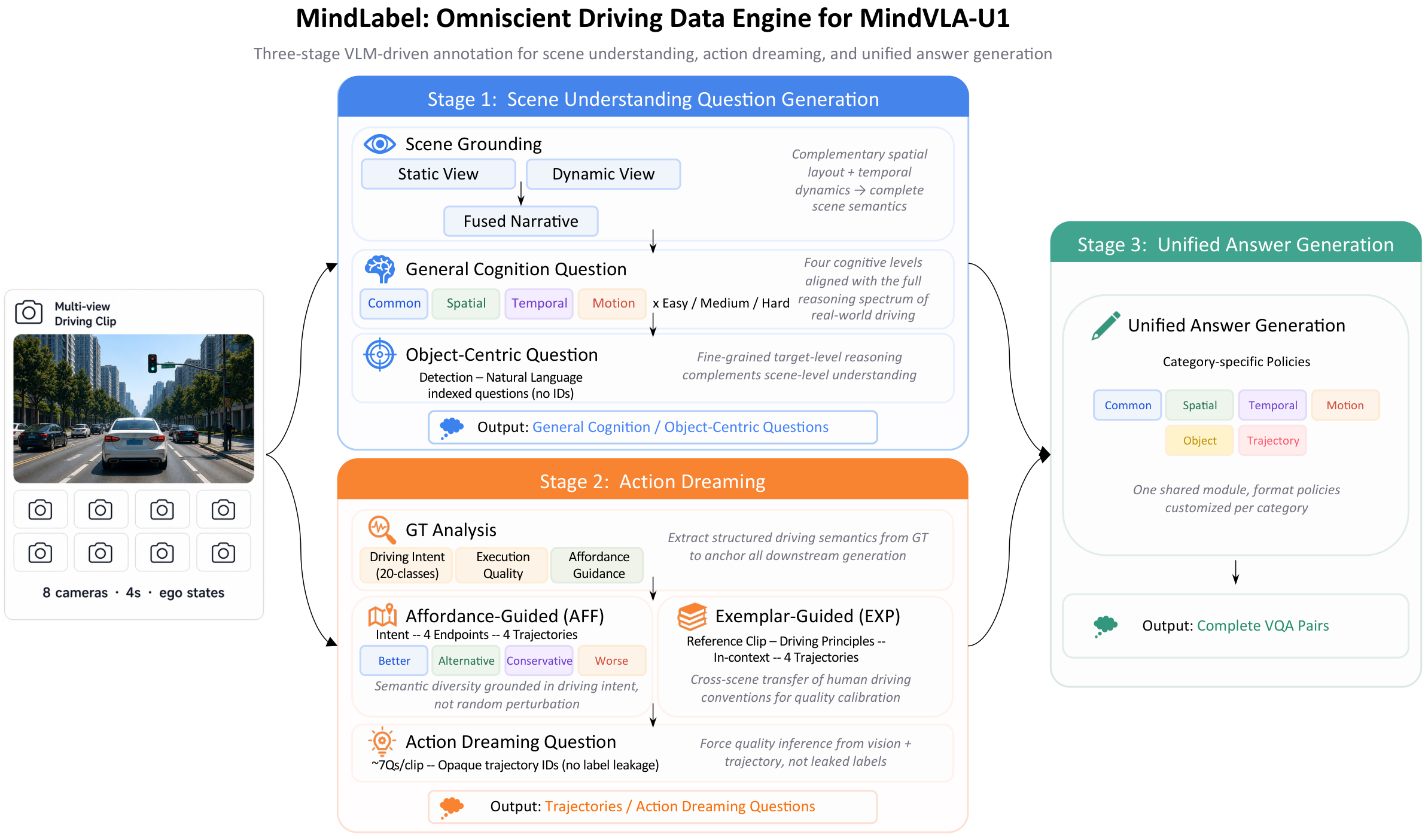

An auto-labeling pipeline run on WOD-E2E frames. Two parallel branches, Scene Understanding VQA (Common · Spatial · Temporal · Motion · Object-Centric) and Action Dreaming (intent-conditioned trajectory synthesis with affordance / exemplar guidance, plus trajectory-evaluation QA), feed a unified answer-generation module. The main results use only the basic VQA and the 3-class ground-truth (GT) intent; the richer outputs are released to the community.